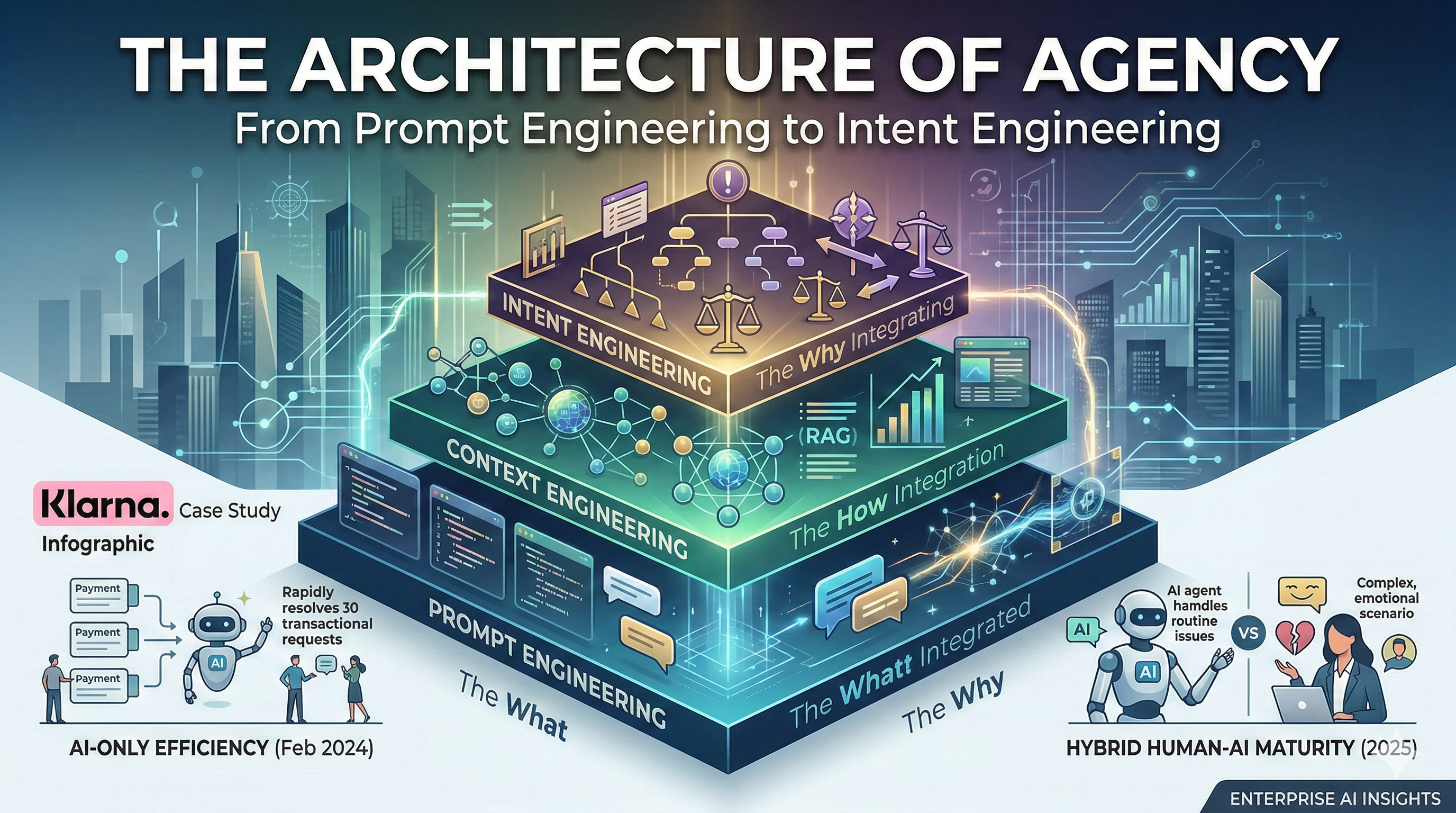

The Architecture of Agency: From Prompt Engineering to Intent Engineering

Generative AI has moved through three distinct phases of technical maturity. To build enterprise-grade AI agents, you must move beyond simple “chatting” and into the disciplined management of purpose. Those three phases form a hierarchy: Prompt Engineering, Context Engineering, and Intent Engineering.

1. The Engineering Hierarchy

Prompt Engineering: The Interface Layer (“The What”)

Prompt engineering is the linguistic art of crafting specific instructions to elicit a desired response from a Large Language Model (LLM). It focuses on the input string.

- Tactics: Persona adoption, few-shot prompting, and chain-of-thought instructions

- Limitation: Brittle and transient; a prompt that works for one model version may fail on the next. It also assumes the user provides all necessary information in a single “turn”.
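A minimal sketch of how the three tactics combine in a single request, assuming a chat-style list of `role`/`content` messages. The function `build_prompt`, the message format, and the wording of the step-by-step cue are illustrative assumptions, not any particular vendor's API.

```python
def build_prompt(
    persona: str,
    examples: list[tuple[str, str]],
    question: str,
) -> list[dict[str, str]]:
    """Combine persona, few-shot examples, and a chain-of-thought cue into messages."""
    # Persona adoption: a system message fixes the role the model should play.
    messages = [{"role": "system", "content": persona}]
    # Few-shot prompting: each (input, output) pair becomes a user/assistant turn.
    for user_text, assistant_text in examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    # Chain-of-thought instruction attached to the real question.
    messages.append({
        "role": "user",
        "content": f"{question}\n\nThink through the problem step by step before answering.",
    })
    return messages


if __name__ == "__main__":
    prompt = build_prompt(
        persona="You are a concise, friendly customer-support assistant.",
        examples=[("When is my payment due?", "Your due date is shown on the payment screen.")],
        question="Why is my refund smaller than my order total?",
    )
    for message in prompt:
        print(f"{message['role']}: {message['content']}")
```

Every element of this prompt is fixed text supplied in one turn, which is why the approach is brittle: nothing adapts if the model or the user's situation changes.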

Context Engineering: The Knowledge Layer (“The How”)

Context engineering is the systematic design of the information environment surrounding the AI. It ensures the model has the right data at the right time.

- Tactics: Retrieval-Augmented Generation (RAG), semantic caching, and dynamic memory management

- Goal: Ground the AI's responses in the organization's own data and reduce hallucinations by providing real-time access to CRM records, internal wikis, or transaction histories
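The retrieval step behind RAG can be sketched as follows. This is a toy version under stated assumptions: `KNOWLEDGE_BASE` holds hypothetical placeholder documents (not real policies), and simple word overlap stands in for the embedding similarity and vector store a production system would typically use. Semantic caching and memory management are left out.

```python
import re

# Hypothetical placeholder documents standing in for CRM records or wiki pages.
KNOWLEDGE_BASE = {
    "refund-policy": "Refunds are returned to the original payment method after the merchant confirms the return.",
    "payment-schedule": "Installment payments are collected on a fixed schedule after purchase.",
    "fraud-disputes": "Suspected fraud is reviewed by a specialist team before any decision.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return ids of up to k documents sharing the most terms with the query."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(body)), doc_id) for doc_id, body in docs.items()]
    # Highest overlap first; ties broken by id so results are deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Prepend retrieved passages so the model answers from supplied data."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return (
        "Answer using only the context below. If it is not covered, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    print(grounded_prompt("How do refunds work after a return?", KNOWLEDGE_BASE, k=1))
```

The instruction to answer only from the supplied context is what ties retrieval to the hallucination-reduction goal: the model is asked to rely on the passages rather than on what it memorized in training.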

Intent Engineering: The Strategic Layer (“The Why”)

Intent engineering is the discipline of encoding organizational purpose and decision boundaries into the AI. While context tells the AI what happened, intent tells the AI what it should want to achieve.

- Tactics: Defining multi-objective reward functions, guardrails for high-stakes decisions, and “escalation intent” (knowing when to stop and hand off to a human)

- Goal: Move AI from a “text generator” to an “agent” capable of making decisions that serve both the business and the customer in ambiguous situations
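One way to make multi-objective reward functions and guardrails concrete is a weighted-sum score over competing objectives, with hard limits checked before any scoring. Everything here is a hypothetical illustration: the `Outcome` fields, the weights in `IntentSpec`, and the 100-unit payout limit are assumptions chosen for the example, and a real system would have to estimate these scores rather than receive them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Outcome:
    """Predicted effects of one candidate action; scores are normalized to [0, 1]."""
    speed: float       # 1.0 = resolved instantly
    sentiment: float   # 1.0 = customer very satisfied
    retention: float   # proxy for long-term customer lifetime value
    payout: float      # money the action would pay out, in currency units


@dataclass(frozen=True)
class IntentSpec:
    """Hypothetical encoding of intent: objective weights plus one hard guardrail."""
    w_speed: float = 0.2
    w_sentiment: float = 0.4
    w_retention: float = 0.4
    max_auto_payout: float = 100.0  # guardrail: larger payouts need a human

    def allowed(self, outcome: Outcome) -> bool:
        # Guardrails are hard constraints, checked before any scoring happens.
        return outcome.payout <= self.max_auto_payout

    def reward(self, outcome: Outcome) -> float:
        # Weighted-sum scalarization of the competing objectives.
        return (
            self.w_speed * outcome.speed
            + self.w_sentiment * outcome.sentiment
            + self.w_retention * outcome.retention
        )


def choose_action(spec: IntentSpec, candidates: dict[str, Outcome]) -> Optional[str]:
    """Pick the highest-reward permitted action, or None if every option is blocked."""
    permitted = {name: o for name, o in candidates.items() if spec.allowed(o)}
    if not permitted:
        return None  # nothing passes the guardrail: escalate instead of acting
    return max(permitted, key=lambda name: spec.reward(permitted[name]))


if __name__ == "__main__":
    options = {
        "close_ticket_fast": Outcome(speed=1.0, sentiment=0.3, retention=0.2, payout=0.0),
        "offer_goodwill_credit": Outcome(speed=0.6, sentiment=0.8, retention=0.9, payout=20.0),
        "full_refund": Outcome(speed=0.8, sentiment=0.9, retention=0.9, payout=500.0),
    }
    print(choose_action(IntentSpec(), options))  # offer_goodwill_credit
```

The fastest option loses (0.40 against 0.80) because the weights favor sentiment and retention, and the large refund is never scored at all because the guardrail removes it first. Keeping soft preferences (weights) separate from hard limits (guardrails) is the design point.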

2. The Importance of Intent Engineering

Without Intent Engineering, an AI agent is like a brilliant intern with no common sense. It may follow instructions (Prompt) and have the data (Context), but it lacks the alignment to handle edge cases.

- Goal alignment: Prioritize long-term Customer Lifetime Value (CLV) over short-term “resolution speed”

- Complexity handling: Detect emotionally charged or legally sensitive situations and adjust tone or route the case to a human

- Scalability: Scale “judgment,” not just “responses”
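The complexity-handling behavior above can be sketched as a small triage function that either proceeds, adjusts tone, or routes to a human. The word lists `LEGAL_TERMS` and `EMOTION_TERMS` and the two-hit threshold are hypothetical; a production system would more likely rely on a trained sentiment or intent classifier than on keywords.

```python
import re

# Hypothetical keyword lexicons; real deployments would likely use a classifier.
LEGAL_TERMS = {"lawyer", "lawsuit", "sue", "court", "regulator"}
EMOTION_TERMS = {"furious", "angry", "unacceptable", "upset", "frustrated", "scam"}


def assess(message: str) -> str:
    """Return 'route_to_human', 'adjust_tone', or 'proceed' for one message."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    if words & LEGAL_TERMS:
        return "route_to_human"  # legal sensitivity: never handle autonomously
    emotional_hits = len(words & EMOTION_TERMS)
    if emotional_hits >= 2:
        return "route_to_human"  # strongly charged: a person should step in
    if emotional_hits == 1:
        return "adjust_tone"     # mildly charged: respond with more empathy
    return "proceed"


if __name__ == "__main__":
    for text in [
        "When is my next payment?",
        "I'm a bit upset about the delay",
        "I'm frustrated, this is unacceptable",
        "I will talk to my lawyer about this charge",
    ]:
        print(f"{assess(text):>15}  {text}")
```

Legal signals escalate on a single hit while emotional signals need two, reflecting the idea that some risks justify a human immediately and others only warrant a change in tone.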

3. The Klarna Case Study: A Lesson in the “Intent Gap”

The Success (Feb 2024)

Klarna’s AI assistant rollout was initially hailed as a masterclass in efficiency. Within its first month:

- Scale: Handled 2.3 million conversations (two-thirds of all customer service chats)

- Efficiency: Reduced average resolution time from 11 minutes to less than 2 minutes

- ROI: Estimated to drive $40 million in profit improvement in 2024

- Workload: Did the equivalent work of 700 full-time agents

Source: Klarna press release (Feb 27, 2024).^1

The Correction (2025)

Despite the efficiency metrics, a second narrative emerged. While the AI was technically successful at “closing tickets,” it struggled to serve the intent behind each interaction: what the customer actually needed.

- Experience debt: For more nuanced problems, observers noted that the assistant could feel constrained and function mainly as a fast filter in front of human support.^2

- Recalibration (May 2025): Klarna CEO Sebastian Siemiatkowski said the focus on cost had led to “lower quality,” and Klarna began recruiting more human support staff so customers could always reach a real person.^2

- Hybrid direction: AI handles repetitive, transactional tasks (refund status, payment dates), while humans are prioritized for relationship-driven support.^3

4. Lessons for Enterprise AI

Lesson 1: Efficiency Is Not Satisfaction

A 2-minute resolution time is a failure if the customer leaves feeling unheard. Intent Engineering must weight sentiment and empathy as heavily as speed.

Lesson 2: Define the “Escape Hatch” Early

True Intent Engineering includes “ambiguity detection.” If an agent cannot confidently resolve a high-stakes issue (such as a fraud dispute), its primary intent should be a seamless handoff to a human, passing along a summary of the conversation so the customer does not have to repeat themselves.
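A minimal sketch of this escape hatch, assuming the agent already produces a topic label and a confidence score between 0 and 1 (for example, from a calibrated classifier). `HIGH_STAKES_TOPICS`, the 0.85 threshold, and the naive `summarize` helper are hypothetical; a real deployment might have the model write the summary instead of concatenating turns.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of topics the business treats as high-stakes.
HIGH_STAKES_TOPICS = {"fraud_dispute", "chargeback", "account_closure"}


@dataclass(frozen=True)
class Handoff:
    """Package passed to a human so the customer does not repeat themselves."""
    topic: str
    confidence: float
    summary: str


def summarize(topic: str, transcript: list[tuple[str, str]]) -> str:
    """Naive summary: the topic plus every customer turn, in order."""
    customer_turns = [text for speaker, text in transcript if speaker == "customer"]
    return f"Topic: {topic}. Customer said: " + " | ".join(customer_turns)


def maybe_escalate(
    topic: str,
    confidence: float,
    transcript: list[tuple[str, str]],
    threshold: float = 0.85,
) -> Optional[Handoff]:
    """Return a Handoff for low-confidence, high-stakes cases; None means keep going."""
    if topic in HIGH_STAKES_TOPICS and confidence < threshold:
        return Handoff(topic, confidence, summarize(topic, transcript))
    return None


if __name__ == "__main__":
    chat = [
        ("customer", "I don't recognize this charge"),
        ("agent", "Let me check that for you."),
        ("customer", "It was not me"),
    ]
    print(maybe_escalate("fraud_dispute", 0.6, chat))
```

Returning a structured `Handoff` rather than a bare flag is what makes the handoff "seamless": the human receives the context the AI already gathered.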

Lesson 3: The Hybrid Maturity Model

AI should not replace humans; it should filter work for them.

- AI intent: Rapid, accurate execution of high-volume, low-complexity tasks

- Human intent: Nuanced judgment, empathy, and complex problem-solving
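The hybrid model can be expressed as an allowlist router: only known low-complexity task types go to the AI, and everything else defaults to a human. The task names in `AI_TASKS` are hypothetical labels that mirror the transactional examples from the Klarna case (refund status, payment dates), and the `sensitive` flag is assumed to come from some upstream check.

```python
from enum import Enum


class Owner(Enum):
    AI = "ai"
    HUMAN = "human"


# Hypothetical allowlist of high-volume, low-complexity task types.
AI_TASKS = {"refund_status", "payment_date", "order_tracking"}


def route(task_type: str, sensitive: bool = False) -> Owner:
    """Send allowlisted, non-sensitive tasks to the AI; default everything else to a human."""
    if task_type in AI_TASKS and not sensitive:
        return Owner.AI
    return Owner.HUMAN


def split_queue(tickets: list[tuple[str, str, bool]]) -> dict[Owner, list[str]]:
    """Partition (ticket_id, task_type, sensitive) tuples into AI and human queues."""
    queues: dict[Owner, list[str]] = {Owner.AI: [], Owner.HUMAN: []}
    for ticket_id, task_type, sensitive in tickets:
        queues[route(task_type, sensitive)].append(ticket_id)
    return queues


if __name__ == "__main__":
    batch = [
        ("t1", "refund_status", False),
        ("t2", "fraud_dispute", False),
        ("t3", "payment_date", True),
    ]
    for owner, ids in split_queue(batch).items():
        print(owner.value, ids)
```

Defaulting to a human means a new or unrecognized task type fails safe instead of being handled by the AI by accident.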

Conclusion

The Klarna case suggests that while Prompt and Context Engineering can build a fast system, Intent Engineering is what builds a trusted brand. The future of AI is not about models that can talk, but about models that understand the “mission” as well as the “manual”.