The Unified Backend: A Technical and Philosophical Analysis of Motia and the Era of AI-Driven Orchestration

The Crisis of Fragmentation in Modern Systems

The evolution of software engineering has reached a critical inflection point where the growing complexity of the modern backend stack has begun to erode developer productivity. In the decade leading up to the mid-2020s, the industry moved from monolithic architectures to microservices, and subsequently to serverless functions, each transition promising greater scalability and isolation. However, these transitions also produced extreme fragmentation. Current backend development is characterized by a "fragmentation tax," where building a single feature requires orchestrating disparate tools: Express or NestJS for REST APIs, BullMQ or Celery for background workers, Redis for caching and state, Temporal for durable workflows, and specialized Python runtimes for the burgeoning field of artificial intelligence.1

This fragmentation necessitates a unified foundation. The fundamental argument presented by the Motia framework is that software engineering is currently splintered across too many isolated runtimes and frameworks.4 When an API lives in one framework and a background job in another, the system's global state and observability become brittle. The "glue code" required to connect these services often exceeds the complexity of the business logic itself. Motia Dev, a code-first backend framework, addresses this by unifying APIs, background jobs, queues, workflows, and AI agents around a single core primitive: the Step.5 By collapsing these distinct categories into a unified runtime, the framework aims to redefine the "full stack" for 2026 and beyond.8

The Genesis of Motia: Mike Piccolo and the Resilience Model

The conceptual origin of Motia is inextricably linked to the professional trajectory and philosophy of its creator, Mike Piccolo.8 Piccolo, who serves as the founder of Motia and a co-founder and board member at FullStack.com, brings a pragmatic perspective to backend engineering that is rooted in his pre-technology background.8 Before building AI infrastructure, Piccolo spent time sweeping construction sites, an experience he cites as foundational to his understanding of resilience and the "pain endurance" required to build startups.8

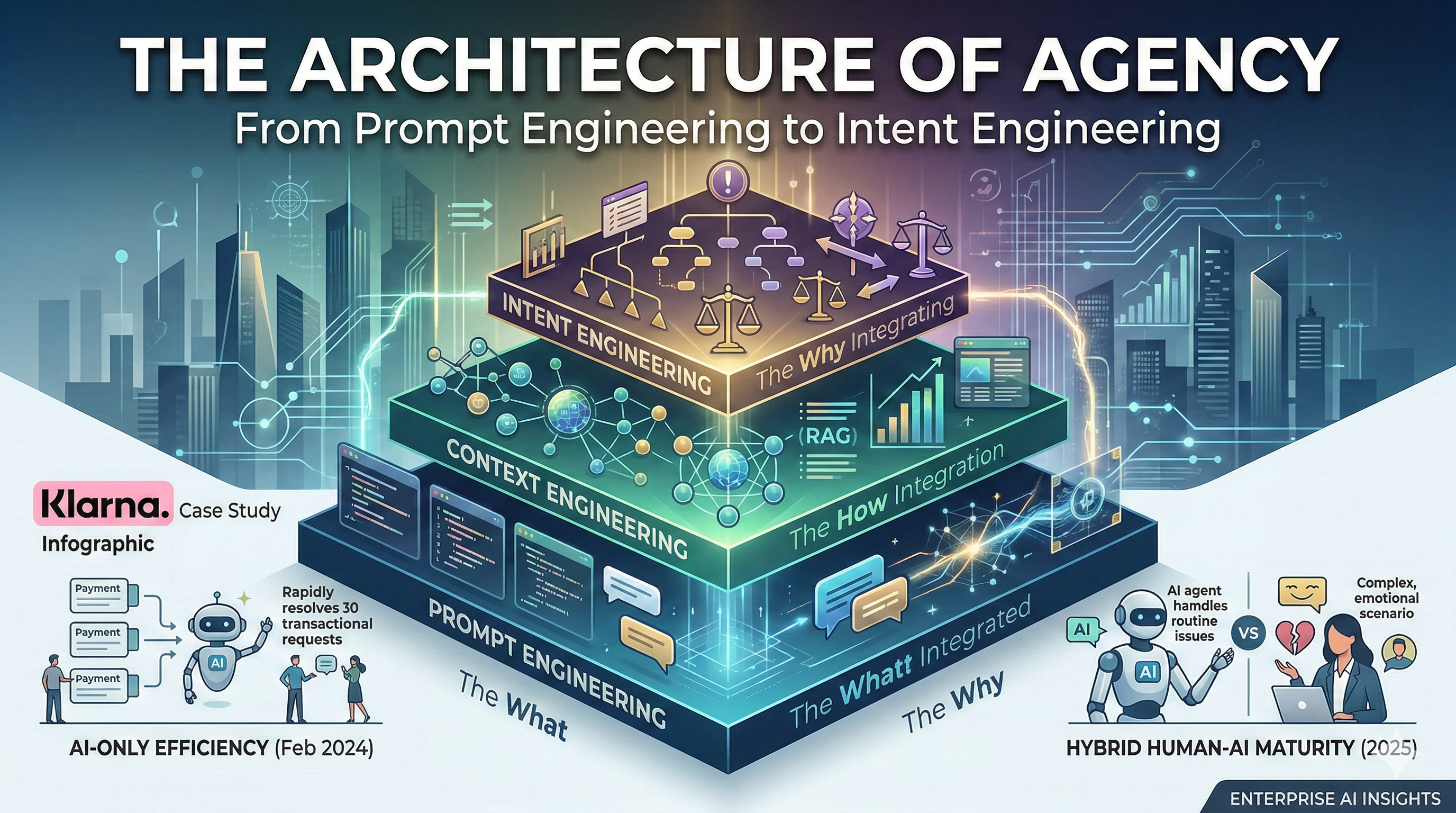

Piccolo’s vision for Motia is driven by a recognition of a paradigm shift in the engineering role. He argues that we are moving from "Framework Wars," characterized by debates over syntax and structure, to "Orchestration Wars," where the primary value lies in connecting deterministic code with stochastic reasoning from Large Language Models (LLMs).8 In this context, Piccolo posits that the title of "AI Engineer" means little unless the role addresses the underlying fragility of the system.8 If an AI agent enters an infinite loop or an LLM provider goes down, the backend must be resilient enough to handle the failure without manual intervention.

The framework is thus a reflection of Piccolo's belief that a "chip on your shoulder" and the ability to build resilient infrastructure matter more than a traditional computer science degree.8 Motia is designed to solve the "Zero-Cost Crisis," a term Piccolo uses to describe the collapse of software production costs due to AI, which shifts the economic leverage of developers from writing code to orchestrating complex, autonomous systems.8

The Motia Manifesto: Unification as a Core Principle

The guiding philosophy of the framework is articulated in the Motia Manifesto, which declares that the future of backend development belongs to multi-language, natively asynchronous, and event-driven systems.1 The manifesto observes that while teams currently piece together half a dozen tools to build a single feature, the real challenge is not merely technical integration but the creation of an abstraction elegant enough to become invisible to the developer.1

Drawing inspiration from React’s "component," the manifesto introduces the "Step" as the backend’s core primitive.1 The argument is that just as React simplified frontend development by abstracting the complexities of the Document Object Model (DOM), Motia redefines the backend by abstracting the complexities of infrastructure, including message brokers, retry logic, and state persistence.1 The manifesto declares that the backend must mirror the distributed, concurrent, and reactive nature of the real world.1

The value proposition of the manifesto is centered on four distinct pillars:

- Developer Experience: Utilizing unified tooling, type safety, and hot reloading across different programming languages to eliminate context switching.1

- Speed and Velocity: Allowing developers to move from prototype to production in minutes by providing a unified environment that eliminates infrastructure setup.1

- Versatility: Enabling the construction of everything from simple REST APIs to complex AI agents within the same framework, using a polyglot design.1

- Reliability: Embedding enterprise-grade observability, fault tolerance, and automatic retries directly into the runtime to replace manual queue configurations.1

| Manifesto Pillar | Objective | Implementation Mechanism |
|---|---|---|
| Unified Primitives | Eliminate fragmentation | The "Step" model for all backend patterns |
| Polyglot Design | Use the best tool for the job | Independent TS, JS, and Python runtimes |
| Event-Driven Core | Ensure high resilience | Native message queue and pub/sub architecture |
| Observability | Reduce debugging latency | Integrated Workbench for tracing and logging |

The Core Primitive: Anatomy of a Step

The architectural brilliance of Motia lies in its radical simplification of backend patterns. Every action, whether it is an HTTP request, a scheduled task, or an event-driven worker, is expressed as a "Step".5 A Step is technically a discrete file—identified by suffixes such as .step.ts, .step.js, or _step.py—that the Motia framework automatically discovers and registers without the need for manual imports or registration.5
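As a rough illustration of convention-based discovery, the sketch below filters a list of relative paths down to Step files using the three suffixes named above and records which runtime each file would need. The `findStepFiles` helper is hypothetical, not part of Motia's API, and it works on an in-memory list instead of walking the filesystem so that it stays self-contained.

```typescript
// Hypothetical sketch of suffix-based Step discovery; not Motia's actual loader.
type StepLanguage = "typescript" | "javascript" | "python";

interface DiscoveredStep {
  path: string;
  language: StepLanguage;
}

// The suffixes from the text, mapped to the runtime that would execute them.
const SUFFIX_TO_LANGUAGE: ReadonlyArray<[string, StepLanguage]> = [
  [".step.ts", "typescript"],
  [".step.js", "javascript"],
  ["_step.py", "python"],
];

// Keep only files that follow the naming convention; helpers, tests and
// configs are ignored, so no manual import or registration list is needed.
function findStepFiles(paths: readonly string[]): DiscoveredStep[] {
  const steps: DiscoveredStep[] = [];
  for (const path of paths) {
    const match = SUFFIX_TO_LANGUAGE.find(([suffix]) => path.endsWith(suffix));
    if (match) steps.push({ path, language: match[1] });
  }
  return steps;
}

const found = findStepFiles([
  "steps/create-order.step.ts",
  "steps/utils.ts",
  "steps/notify.step.js",
  "steps/score_move_step.py",
  "steps/score_move.py",
]);
console.log(found.map((s) => `${s.language}: ${s.path}`));
```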

Structural Components of the Step

A Step is divided into two fundamental sections: the Config and the Handler.5 The Config is a declarative object that defines the "when" and "how" of the execution. This includes the trigger type (API, queue, cron), validation schemas (such as Zod for TypeScript), and event emissions.5 The Handler is the functional body where the business logic resides. It receives a context object that provides the Step with "superpowers," such as the ability to interact with the global state, emit events to other Steps, or stream data to a frontend client in real-time.5
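A minimal sketch of that two-part shape follows. The types (`StepConfig`, `StepContext`) and their field names are simplified stand-ins written for this illustration, not Motia's published type definitions; a real Step would import its types from the framework and would typically declare a Zod schema for its input.

```typescript
// Hypothetical, simplified stand-ins for a Step's Config and Handler types.
interface StepConfig {
  name: string;
  type: "http" | "queue" | "cron"; // trigger kinds mentioned in the text
  path?: string;                   // only meaningful for http triggers
  emits: string[];                 // topics this Step may emit
}

// The "superpowers" a handler receives through its context object.
interface StepContext {
  state: Map<string, unknown>;
  emit: (topic: string, data: unknown) => void;
  log: (message: string) => void;
}

interface OrderInput { item: string; quantity: number }
interface HttpResult { status: number; body: unknown }

// In a real Step file, both values would be exported for the runtime to discover.
const config: StepConfig = {
  name: "CreateOrder",
  type: "http",
  path: "/orders",
  emits: ["order.created"],
};

const handler = async (input: OrderInput, ctx: StepContext): Promise<HttpResult> => {
  const id = `order-${ctx.state.size + 1}`;    // naive id, good enough for a sketch
  ctx.state.set(id, input);                    // persist through shared state
  ctx.emit("order.created", { id, ...input }); // notify downstream Steps
  ctx.log(`created ${id}`);
  return { status: 201, body: { id } };
};
```

The Config is plain data the runtime can read without executing anything, while the Handler never touches a queue or a store directly; everything goes through `ctx`.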

This design allows for an unprecedented level of flexibility. By merely changing the type in the configuration from http to queue, a developer can transform a synchronous API endpoint into an asynchronous background job.4 This "Backend as Code" approach ensures that infrastructure is inferred from logic rather than explicitly managed, a shift that Piccolo believes is necessary for the AI era.12
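The idea that the trigger is configuration rather than code can be sketched as one handler bound to two different trigger declarations. The `bindTrigger` helper, the in-memory queue and the field names are hypothetical; they only illustrate that the business logic stays identical while the delivery mechanism changes from request/response to enqueue-and-acknowledge.

```typescript
// Hypothetical trigger declarations; only the `type` differs in practice.
type Trigger =
  | { type: "http"; path: string }
  | { type: "queue"; topic: string };

interface TriggerResult<Out> { status: number; body: Out | { queued: number } }

// A toy runtime that wires the same handler to whichever trigger is declared.
function bindTrigger<In, Out>(trigger: Trigger, handler: (input: In) => Promise<Out>) {
  const pending: In[] = []; // stands in for a durable message queue

  // HTTP: run inline and return the result. Queue: enqueue and acknowledge.
  const invoke = async (input: In): Promise<TriggerResult<Out>> => {
    if (trigger.type === "http") return { status: 200, body: await handler(input) };
    pending.push(input);
    return { status: 202, body: { queued: pending.length } };
  };

  // Worker side of the queue case: drain messages through the same handler.
  const drain = async (): Promise<Out[]> => {
    const results: Out[] = [];
    for (let next = pending.shift(); next !== undefined; next = pending.shift()) {
      results.push(await handler(next));
    }
    return results;
  };

  return { invoke, drain };
}

// The business logic is written once...
const double = async (input: { n: number }) => input.n * 2;
// ...and exposed either as an endpoint or as a background job.
const asEndpoint = bindTrigger({ type: "http", path: "/double" }, double);
const asJob = bindTrigger({ type: "queue", topic: "numbers.double" }, double);
```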

The Lifecycle of an Execution

The execution of a Step within the Motia framework follows a standardized lifecycle that ensures durability and observability. When a trigger—such as an incoming HTTP POST request—is detected, the Motia runtime identifies the corresponding Step.5 The framework automatically handles input validation, ensuring that the handler only receives data that conforms to the defined schema.6
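The validate-then-dispatch order can be sketched without any framework. Motia uses Zod schemas for TypeScript Steps; to keep the example dependency-free, the hypothetical `Schema` type below is a hand-written type guard, and `dispatch` is an illustrative name rather than a Motia function. Invalid input is rejected with a 400-style result before the handler ever runs.

```typescript
// A schema is modeled as a type guard; a real TypeScript Step would use Zod.
type Schema<T> = (value: unknown) => value is T;

interface Move { gameId: string; from: string; to: string }

const SQUARE = /^[a-h][1-8]$/; // algebraic chess square, e.g. "e4"

// Hypothetical schema: gameId is a string, from/to are board squares.
const isMove: Schema<Move> = (value: unknown): value is Move => {
  if (typeof value !== "object" || value === null) return false;
  const { gameId, from, to } = value as Record<string, unknown>;
  return typeof gameId === "string"
    && typeof from === "string" && SQUARE.test(from)
    && typeof to === "string" && SQUARE.test(to);
};

type DispatchResult<Out> =
  | { status: 200; body: Out }
  | { status: 400; error: string };

// Validate first; the handler is only invoked with input that passed the schema.
async function dispatch<In, Out>(
  schema: Schema<In>,
  handler: (input: In) => Promise<Out>,
  raw: unknown,
): Promise<DispatchResult<Out>> {
  if (!schema(raw)) return { status: 400, error: "input does not match schema" };
  return { status: 200, body: await handler(raw) };
}
```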

During the execution of the handler, any side effects, such as writing to the state or emitting an event, are managed by the underlying engine.15 If the handler fails, the framework employs an automatic retry mechanism, which typically defaults to three attempts with configurable backoff policies.1 This "durable execution" ensures that the code will run to completion even if the server crashes or the process is interrupted.19
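A retry loop with exponential backoff, of the kind described above, looks roughly like this. The default of three attempts mirrors the text; the `RetryPolicy` shape, the 100 ms base delay and the doubling factor are assumptions for the sketch, not documented Motia settings. Retries on their own only cover transient failures; surviving a process crash additionally requires persisting progress, which is what durable-execution engines add on top.

```typescript
// Hypothetical retry policy; the 3-attempt default mirrors the text above.
interface RetryPolicy {
  maxAttempts: number; // total tries, including the first one
  baseDelayMs: number; // wait before the second attempt
  factor: number;      // multiplier applied after each further failure
}

const defaultPolicy: RetryPolicy = { maxAttempts: 3, baseDelayMs: 100, factor: 2 };

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Delay after the n-th failure: base * factor^(n - 1), i.e. 100 ms, 200 ms, ...
function backoffDelay(policy: RetryPolicy, failures: number): number {
  return policy.baseDelayMs * Math.pow(policy.factor, failures - 1);
}

async function runWithRetry<T>(
  task: (attempt: number) => Promise<T>,
  policy: RetryPolicy = defaultPolicy,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= policy.maxAttempts; attempt++) {
    try {
      return await task(attempt);
    } catch (err) {
      lastError = err;
      // Only wait if another attempt remains.
      if (attempt < policy.maxAttempts) await sleep(backoffDelay(policy, attempt));
    }
  }
  throw lastError; // all attempts exhausted: surface the last failure
}
```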

The iii Engine: The High-Performance Heart of Motia

The operational foundation of Motia is the iii engine, a runtime designed to orchestrate the lifecycle of the framework’s various language-specific SDK processes.6 The engine acts as the "connective tissue" that manages the infrastructure concerns—queues, pub/sub, state storage, stream servers, and cron scheduling—through a single declarative iii-config.yaml file.15

Technical Architecture and IPC

The iii engine adopts an "orchestrator of processes" model.21 For a polyglot application, the engine spawns independent runtimes for TypeScript (via Node.js or Bun) and Python.15 These processes do not communicate directly; instead, they utilize a custom-developed Inter-Process Communication (IPC) solution and a shared infrastructure layer.15 This decoupling ensures that a memory-heavy Python machine learning task does not block the responsiveness of a TypeScript API server.15
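Motia's own IPC protocol is not described here, so the following is only a generic illustration of how separate runtimes can exchange events over a byte stream: newline-delimited JSON (NDJSON) framing, where each message is one JSON document terminated by a newline. The `EventEnvelope` fields and the `NdjsonDecoder` class are hypothetical. The decoder buffers partial chunks, because a pipe or socket may split one message across several reads.

```typescript
// Hypothetical event envelope exchanged between, say, a Node.js and a Python process.
interface EventEnvelope {
  topic: string;   // e.g. "move.submitted"
  traceId: string; // lets observability tooling stitch a flow together
  data: unknown;
}

// One message per line. Without an indent argument, JSON.stringify escapes any
// newline inside strings, so the terminating "\n" is unambiguous.
function encode(msg: EventEnvelope): string {
  return JSON.stringify(msg) + "\n";
}

// Incremental decoder: feed it arbitrary chunks, get back complete messages.
class NdjsonDecoder {
  private buffer = "";

  push(chunk: string): EventEnvelope[] {
    this.buffer += chunk;
    const messages: EventEnvelope[] = [];
    let newline = this.buffer.indexOf("\n");
    while (newline !== -1) {
      const line = this.buffer.slice(0, newline);
      this.buffer = this.buffer.slice(newline + 1);
      if (line.trim() !== "") messages.push(JSON.parse(line) as EventEnvelope);
      newline = this.buffer.indexOf("\n");
    }
    return messages; // any trailing partial line stays buffered for the next chunk
  }
}
```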

There is currently a strategic move to optimize the core of the iii engine by transitioning it from TypeScript/Node.js to a more performance-oriented language like Rust.6 The rationale for this transition is rooted in the significant performance deltas between high-level runtimes and systems-level languages for CPU-bound and memory-intensive tasks.21

| Metric | Performance Comparison (Rust vs. Go) | Impact on Orchestration |
|---|---|---|
| CPU-bound Logic | Rust is … | Reduced latency in complex workflows |
| Memory Intensity | Rust is … | Higher concurrency for AI agents |
| I/O Workloads | Negligible difference | Minimal impact on simple API routes |
| Web Server Throughput | Rust is … | Greater efficiency in the stream layer |

By utilizing Rust, the iii engine can provide the millisecond-level responsiveness required for "Context Engineering" while retaining Rust's compile-time memory-safety guarantees.8 This architectural choice enables the framework to be "cloud-agnostic," as the core binary can be compiled for any major OS or architecture, removing the dependency on heavy Node.js environments for Python-focused developers.21

Infrastructure via Declaration

The iii engine abstracts infrastructure through an adapter-based system. In a development environment, the engine typically defaults to file-based storage for state and local processes for queues.3 The same application code can then be promoted to production by modifying iii-config.yaml to use Redis for state, RabbitMQ for message queuing, and a distributed stream server.3 This "infrastructure as config" model allows developers to focus entirely on business logic, trusting the runtime to handle the intricacies of distributed systems.1
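The adapter idea can be sketched as an interface with interchangeable implementations chosen from configuration. Everything here is hypothetical: the `StateAdapter` interface, the `adapter` config key and both implementations are illustrations, and the Redis-backed option is only a stub because a real one would need a Redis client library. The point is that handler code depends on the interface, never on a concrete backend.

```typescript
// Handler code only ever sees this interface.
interface StateAdapter {
  get(key: string): Promise<unknown>;
  set(key: string, value: unknown): Promise<void>;
}

// Development default: an in-process map standing in for file-based storage.
class MemoryStateAdapter implements StateAdapter {
  private readonly data = new Map<string, unknown>();
  async get(key: string): Promise<unknown> { return this.data.get(key); }
  async set(key: string, value: unknown): Promise<void> { this.data.set(key, value); }
}

// Production placeholder: a real adapter would wrap a Redis client here.
class RedisStateAdapterStub implements StateAdapter {
  readonly url: string;
  constructor(url: string) { this.url = url; }
  async get(_key: string): Promise<unknown> { throw new Error(`no Redis client wired to ${this.url}`); }
  async set(_key: string, _value: unknown): Promise<void> { throw new Error(`no Redis client wired to ${this.url}`); }
}

// Hypothetical config shape: switching environments changes data, not handler code.
type StateConfig = { adapter: "memory" } | { adapter: "redis"; url: string };

function createStateAdapter(config: StateConfig): StateAdapter {
  if (config.adapter === "redis") return new RedisStateAdapterStub(config.url);
  return new MemoryStateAdapter();
}

// Business logic written against the interface works with either adapter.
async function cacheSummary(state: StateAdapter, docId: string, summary: string): Promise<unknown> {
  await state.set(`summary:${docId}`, summary);
  return state.get(`summary:${docId}`);
}
```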

Polyglot Runtimes: Bridging the TypeScript/Python Divide

The most significant innovation of Motia is its ability to support multiple languages within the same project and the same workflow.2 This is not merely a "multi-repo" setup, but a unified application where different Steps, written in different languages, share the same state and trigger each other through a common event bus.6

Language Interoperability and AI Integration

In the current technological landscape, AI development is largely dominated by Python, while web development is anchored in the JavaScript/TypeScript ecosystem.12 Motia bridges this divide by allowing a TypeScript Step to handle high-throughput HTTP requests and then enqueue a message for a Python Step to process using specialized libraries like PyTorch or Hugging Face.4

This multi-language workflow is demonstrated in the framework's "Chess Arena" example, where a TypeScript API handles real-time move updates and scoring, while a Python engine integration manages the complex Stockfish chess evaluation.6 The events flow seamlessly between the runtimes:

- TypeScript Step: Receives move via HTTP, validates with Zod, and enqueues move.submitted.

- Python Step: Triggers on move.submitted, evaluates move quality using Stockfish, and emits move.evaluated.

- TypeScript Step: Triggers on move.evaluated, updates the global leaderboard state, and streams the new score to all connected clients.6
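The three-step flow can be simulated in a single process to show how topics connect the Steps. This is a hypothetical sketch: the `EventBus` class stands in for the engine's cross-runtime event bus and delivers synchronously for simplicity, and `evaluateMove` is a stub standing in for the Python Step that calls Stockfish; its scoring rule is invented purely so the example runs.

```typescript
type Listener = (data: unknown) => void;

// Minimal in-process pub/sub; in Motia the engine routes these across runtimes.
class EventBus {
  private readonly listeners = new Map<string, Listener[]>();
  subscribe(topic: string, fn: Listener): void {
    const list = this.listeners.get(topic) ?? [];
    list.push(fn);
    this.listeners.set(topic, list);
  }
  emit(topic: string, data: unknown): void {
    for (const fn of this.listeners.get(topic) ?? []) fn(data);
  }
}

interface MovePayload { player: string; from: string; to: string }
interface Evaluation { player: string; quality: number }

const bus = new EventBus();
const leaderboard = new Map<string, number>(); // stands in for shared state

// Step 1 (a TypeScript API Step in the example): accept the move and enqueue it.
function submitMove(move: MovePayload): void {
  bus.emit("move.submitted", move);
}

// Step 2 (a Python Step in the example): stubbed evaluation instead of Stockfish.
function evaluateMove(move: MovePayload): number {
  return move.to.endsWith("4") ? 1 : 0; // invented rule, for illustration only
}
bus.subscribe("move.submitted", (data) => {
  const move = data as MovePayload;
  const evaluation: Evaluation = { player: move.player, quality: evaluateMove(move) };
  bus.emit("move.evaluated", evaluation);
});

// Step 3 (a TypeScript Step): update the leaderboard; streaming is omitted here.
bus.subscribe("move.evaluated", (data) => {
  const { player, quality } = data as Evaluation;
  leaderboard.set(player, (leaderboard.get(player) ?? 0) + quality);
});

submitMove({ player: "alice", from: "e2", to: "e4" });
submitMove({ player: "alice", from: "g1", to: "f3" });
console.log(leaderboard.get("alice")); // prints 1
```

No Step references another Step directly; each only knows the topics it subscribes to and emits, which is what lets the middle Step live in a different language runtime.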

| Language | Runtime Status | Primary Role |
|---|---|---|
| TypeScript | Stable | Validation, API routing, stream orchestration |
| JavaScript | Stable | Prototyping, legacy logic |
| Python | Stable | AI agents, LLM tool-calling, data analysis |
| Ruby | Beta | Quick integrations, scripting |
| Go | Soon | High-performance systems logic |

This polyglot capability is a direct response to the "Zero-Cost Crisis" identified by Piccolo.8 By allowing teams to leverage the ecosystem of both languages without the friction of microservices, Motia dramatically lowers the barrier to building intelligent, agent-native software.8

Advanced Features: State Management and Durable Streams

Beyond basic routing and queuing, Motia provides advanced primitives for state and real-time communication that are designed specifically for the unpredictable nature of AI and long-running workflows.6

Persistent State Management

Managing state in a distributed system is traditionally one of the most complex tasks, requiring careful consideration of race conditions and data consistency. Motia simplifies this by providing a persistent key-value storage layer that works across all Steps and languages.15

State in Motia is organized into "groups," which function like folders.25 A Step can set a value in one process and another Step can get that value later, regardless of whether it is written in the same language or running in the same runtime instance.15 The framework also supports atomic updates, such as incrementing or decrementing values, which prevents the "lost update" problem in high-concurrency environments.15 This is particularly useful for tracking progress in parallel tasks or caching expensive AI model outputs.3
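The difference between an atomic increment and a naive read-modify-write is easiest to see in a small model. The `GroupedState` class below is hypothetical, not Motia's state API: it organizes keys into groups and offers an `increment` whose read and write happen with no `await` in between. `unsafeIncrement`, by contrast, awaits between reading and writing, so two concurrent calls both read the old value and one update is lost. In a single Node.js process the absence of an `await` is what makes the increment atomic; a distributed backend would rely on the store's own atomic operation (Redis `INCRBY`, for example).

```typescript
// Hypothetical grouped key-value store; groups behave like folders of keys.
class GroupedState {
  private readonly groups = new Map<string, Map<string, unknown>>();

  private group(groupId: string): Map<string, unknown> {
    let g = this.groups.get(groupId);
    if (!g) { g = new Map(); this.groups.set(groupId, g); }
    return g;
  }

  async get<T>(groupId: string, key: string): Promise<T | undefined> {
    return this.group(groupId).get(key) as T | undefined;
  }

  async set<T>(groupId: string, key: string, value: T): Promise<void> {
    this.group(groupId).set(key, value);
  }

  // Atomic here: the read and the write run in one synchronous section.
  async increment(groupId: string, key: string, by = 1): Promise<number> {
    const g = this.group(groupId);
    const next = ((g.get(key) as number | undefined) ?? 0) + by;
    g.set(key, next);
    return next;
  }
}

// Anti-pattern for comparison: the await between read and write opens a race window.
async function unsafeIncrement(state: GroupedState, groupId: string, key: string): Promise<void> {
  const current = (await state.get<number>(groupId, key)) ?? 0;
  await state.set(groupId, key, current + 1);
}
```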

Real-time Streaming and WebSockets

Modern AI applications require more than just a static JSON response; they require real-time feedback loops. Motia includes a native "streams" capability that uses WebSockets to deliver updates to connected clients.2 When a Step writes a message to a stream—for instance, a chunk of text generated by an LLM—the framework automatically pushes that update to any frontend client subscribed to that stream ID.3

Motia provides a specialized React client library (@motiadev/stream-client-react) that simplifies the process of consuming these updates.2 This creates a "typewriter" effect in the UI without requiring the developer to manage WebSocket connections, heartbeat logic, or subscription grouping manually.3 This is essential for building engaging AI chat apps and real-time financial dashboards.2
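Server-side, the mechanism amounts to a registry that fans out each write to the subscribers of a stream ID. The `StreamHub` class below is a hypothetical, transport-free sketch: where it calls a plain callback, a real implementation would send a WebSocket frame, and it does not reflect the API of `@motiadev/stream-client-react` or of Motia's stream server.

```typescript
type Subscriber = (chunk: string) => void;

class StreamHub {
  private readonly subscribers = new Map<string, Set<Subscriber>>();

  // Returns an unsubscribe function, the usual cleanup pattern for UI clients.
  subscribe(streamId: string, fn: Subscriber): () => void {
    const set = this.subscribers.get(streamId) ?? new Set<Subscriber>();
    set.add(fn);
    this.subscribers.set(streamId, set);
    return () => { set.delete(fn); };
  }

  // Called by a Step: push one chunk (e.g. an LLM token) to every subscriber.
  // Returns how many subscribers received it.
  write(streamId: string, chunk: string): number {
    const set = this.subscribers.get(streamId);
    if (!set) return 0;
    for (const fn of set) fn(chunk);
    return set.size;
  }
}

// Usage: a Step writes tokens as they arrive; the client accumulates them.
const hub = new StreamHub();
let transcript = "";
const stop = hub.subscribe("chat-42", (chunk) => { transcript += chunk; });
for (const token of ["Hel", "lo", ", world"]) hub.write("chat-42", token);
stop();
hub.write("chat-42", "!"); // no longer delivered
console.log(transcript); // prints Hello, world
```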

Comparative Taxonomy: Motia vs. The Landscape

To understand Motia’s importance, one must analyze its position relative to both traditional web frameworks and modern durable execution engines.

Structural Comparison with Web Frameworks

Traditional frameworks like NestJS or Next.js are primarily focused on the "request-response" cycle and the organization of code structure. Motia, however, is an "infrastructure-first" framework.12

| Feature | NestJS (Standard) | Next.js (Full Stack) | Motia Dev |
|---|---|---|---|
| Architecture | Modules/Providers | File-based Routing | The Step Primitive |
| Async Tasks | Needs BullMQ/Redis | Needs Inngest/Temporal | Native/Built-in |
| Polyglot | Single language (TS) | Single language (TS) | Native TS + Python |
| State | External DB/Cache | External DB/Cache | Integrated K-V State |
| Observability | External (OpenTelemetry) | Vercel Analytics | Integrated Workbench |

NestJS provides a rigorous structure for large teams but requires significant boilerplate to add a queue or a worker.12 Next.js provides excellent full-stack capabilities for UI-driven apps but struggles with long-running tasks due to serverless timeouts.12 Motia serves as the "modern glue," removing the distinction between an API and a worker and allowing for a "flat" architecture where logic takes precedence over boilerplate.12

Taxonomy of Durable Execution Engines

The landscape of durable execution—defined by the guarantee that code will run to completion—is dominated by players like Temporal, Inngest, and Trigger.dev.20

| Platform | Model | Deployment Complexity | Best For |
|---|---|---|---|
| Temporal | Centralized Orchestration | High (Multi-service cluster) | Mission-critical, massive scale |
| Inngest | Event-Driven Choreography | Low (HTTP-based/Serverless) | Event-heavy serverless apps |
| Trigger.dev | Integration-Focused | Moderate (Task-based) | API-heavy TS workflows |
| Motia Dev | Unified Backend Framework | Low (Single iii engine binary) | AI-heavy polyglot apps |

Temporal is the battle-tested giant of the industry, used by companies like Netflix for industrial-strength reliability.27 However, it requires a dedicated platform team to manage its complex infrastructure and imposes a steep learning curve, since developers must understand its "deterministic" coding constraints.20 Inngest offers a more approachable, "serverless-first" model built on HTTP calls, which eliminates the need for persistent workers but can lead to unpredictable pricing because every step is a billable event.20
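The "deterministic" constraint makes more sense with a concrete model of replay-based durable execution, the general technique behind engines such as Temporal: each completed step's result is written to a journal, and after a crash the workflow function is run again from the top, with completed steps answered from the journal instead of being re-executed. The `DurableRun` class and the `onboardUser` workflow below are a hypothetical toy, not any engine's API. Replay only works if the workflow requests the same step names in the same order on every run, which is exactly why such engines forbid nondeterminism inside workflow code.

```typescript
// Journal of completed step results, keyed by step name. A real engine persists this.
type Journal = Map<string, unknown>;

class DurableRun {
  readonly executed: string[] = []; // steps actually run during this attempt
  private readonly journal: Journal;

  constructor(journal: Journal) { this.journal = journal; }

  // Run `fn` once; on replay, return the recorded result without re-running it.
  async step<T>(name: string, fn: () => Promise<T>): Promise<T> {
    if (this.journal.has(name)) return this.journal.get(name) as T;
    const result = await fn();
    this.journal.set(name, result); // checkpoint before moving on
    this.executed.push(name);
    return result;
  }
}

// A workflow is ordinary async code that routes side effects through `run.step`.
async function onboardUser(run: DurableRun, email: string, crash = false): Promise<string> {
  const userId = await run.step("create-user", async () => `user:${email}`);
  await run.step("send-welcome", async () => `sent to ${email}`);
  if (crash) throw new Error("process crashed"); // simulated failure mid-workflow
  return run.step("notify-crm", async () => `crm:${userId}`);
}
```

On the second run after a simulated crash, only `notify-crm` executes; the user is not created twice and the welcome email is not re-sent.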

Motia distinguishes itself by being a complete backend framework rather than just an orchestration layer.4 While Inngest and Trigger.dev can be added to an existing project, Motia is the project. It provides the API routes, the state, the streams, and the workers in one cohesive unit, making it significantly faster for greenfield AI projects where rapid iteration is more important than the "industrial" scale of a Temporal cluster.2

AI Agents and the Future of Orchestration

The integration of AI into the backend is the primary driver behind Motia’s design. As Piccolo identifies, the "Full Stack" in 2026 is no longer about just React and Node; it is about the orchestration of agents.8

Agentic Patterns in Motia

Building AI agents requires managing state over long durations and handling the non-deterministic nature of LLM responses.8 Motia supports several advanced agentic patterns:

- Human-in-the-Loop: A Step can perform a task (like generating a marketing campaign), save the draft to the state, and then "wait" for an external event (like a Slack approval) to proceed to the next Step.31

- Tool Calling and ReAct: Using Python Steps, developers can build agents that iteratively call different "tools" (other Steps) to solve a problem, with the framework automatically tracking the "thought process" through its observability layer.17

- Multi-Modal Flows: Agents that process PDFs (using Docling), images, and text can be coordinated across language runtimes, with the output streamed token-by-token to the client for a responsive feel.14
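A tool-calling (ReAct-style) loop reduces to: ask the model for the next action, run the named tool, record the observation, and repeat until the model answers. The sketch below is hypothetical throughout: `decide` is a scripted stand-in for an LLM call, the two tools are toys, and `maxTurns` is an illustrative guard. The guard matters because it turns the "agent stuck in an infinite loop" failure mode described earlier into an ordinary error that a retry or alerting layer can handle.

```typescript
type Action =
  | { kind: "tool"; tool: string; input: string }
  | { kind: "answer"; text: string };

type Tool = (input: string) => Promise<string>;

// One entry per tool call; this history is fed back to the model each turn.
interface Turn { action: Action; observation?: string }

async function runAgent(
  question: string,
  decide: (question: string, history: Turn[]) => Promise<Action>, // stand-in for an LLM
  tools: Record<string, Tool>,
  maxTurns = 5,
): Promise<{ answer: string; history: Turn[] }> {
  const history: Turn[] = [];
  for (let turn = 0; turn < maxTurns; turn++) {
    const action = await decide(question, history);
    if (action.kind === "answer") return { answer: action.text, history };
    const tool = tools[action.tool];
    // Unknown tools become observations, so the model can correct itself.
    const observation = tool ? await tool(action.input) : `unknown tool: ${action.tool}`;
    history.push({ action, observation });
  }
  throw new Error(`agent exceeded ${maxTurns} turns`); // loop guard
}

// Scripted "model": look up a price, multiply it, then answer.
const scripted = async (_q: string, history: Turn[]): Promise<Action> => {
  if (history.length === 0) return { kind: "tool", tool: "price", input: "widget" };
  if (history.length === 1) return { kind: "tool", tool: "multiply", input: `${history[0].observation}*3` };
  return { kind: "answer", text: `Three widgets cost ${history[1].observation}` };
};

const tools: Record<string, Tool> = {
  price: async (item) => (item === "widget" ? "4" : "0"),
  multiply: async (expr) => {
    const [a, b] = expr.split("*").map(Number);
    return String(a * b);
  },
};
```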

AI-Assisted Development

Motia further differentiates itself by being "AI-native" in its development workflow. Every Motia project includes detailed AI development guides in a format compatible with tools like Cursor, GitHub Copilot, and Claude Code.6 These guides include optimized .mdc rules that allow the AI coder to understand the Motia Step pattern, the event-driven architecture, and the state management system.6 This ensures that when a developer asks an AI to "build a new feature," the AI produces code that adheres to the framework’s specific architectural requirements.6

Operational Strategy: The Workbench and Deployment

The developer experience of Motia is anchored by the Workbench, a local UI that provides a visual dashboard for building and debugging.4

The Workbench as a Dev Superpower

The Workbench provides a real-time view of the system that is traditionally missing in backend development. It allows developers to:

- Visualize Workflows: See the entire application as an interactive diagram, revealing how Steps are connected and where bottlenecks may occur.3

- Trace Executions: Track a single request across multiple Steps, inspecting the inputs, outputs, and logs at every stage of the lifecycle.12

- Inspect State: Browse the global key-value store in real-time to see how data is changing as the application runs.13

- Manual Triggers: Manually fire an event or an API call to test a specific Step without having to run a full end-to-end integration test.12

This level of integrated observability is a "game-changer" for rapid iteration, particularly for AI agents where the execution path can be highly dynamic.12
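Following one request across several Steps works because every emitted event carries the trace ID of the event that caused it. The sketch below shows that propagation under stated assumptions: `Tracer`, the `LogRecord` shape and the counter-based trace IDs (a stand-in for random IDs) are hypothetical and do not describe the Workbench's actual data model.

```typescript
interface LogRecord { traceId: string; step: string; message: string }
interface TracedEvent { topic: string; traceId: string; data: unknown }

type StepFn = (
  event: TracedEvent,
  log: (message: string) => void,
  emit: (topic: string, data: unknown) => void,
) => void;

class Tracer {
  readonly logs: LogRecord[] = [];
  private readonly steps = new Map<string, { name: string; fn: StepFn }[]>();
  private counter = 0;

  on(topic: string, name: string, fn: StepFn): void {
    const list = this.steps.get(topic) ?? [];
    list.push({ name, fn });
    this.steps.set(topic, list);
  }

  // Entry point (e.g. an incoming HTTP request): mint a fresh trace ID.
  start(topic: string, data: unknown): string {
    const traceId = `trace-${++this.counter}`;
    this.dispatch({ topic, traceId, data });
    return traceId;
  }

  private dispatch(event: TracedEvent): void {
    for (const { name, fn } of this.steps.get(event.topic) ?? []) {
      const log = (message: string) => { this.logs.push({ traceId: event.traceId, step: name, message }); };
      // Child events inherit the parent's trace ID: this is the propagation.
      const emit = (topic: string, data: unknown) => this.dispatch({ topic, traceId: event.traceId, data });
      fn(event, log, emit);
    }
  }

  // What a trace view would show: every log line for one request, in order.
  trace(traceId: string): LogRecord[] {
    return this.logs.filter((r) => r.traceId === traceId);
  }
}

const tracer = new Tracer();
tracer.on("order.received", "ValidateOrder", (e, log, emit) => { log("validated"); emit("order.valid", e.data); });
tracer.on("order.valid", "ChargeCard", (_e, log) => { log("charged"); });
const t1 = tracer.start("order.received", { id: 1 });
tracer.start("order.received", { id: 2 });
console.log(tracer.trace(t1).map((r) => `${r.step}: ${r.message}`));
```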

Deployment and Scale

Motia is designed to be "deploy-anywhere" with zero vendor lock-in.24 The framework is open-source and free to self-host on any infrastructure (AWS, GCP, Azure).18 For teams seeking a managed experience, Motia Cloud provides an instant, production-ready environment that handles auto-scaling, updates, and atomic deployments.18

| Deployment Mode | Infrastructure | Benefit |
|---|---|---|
| Self-Hosted | Private (AWS/GCP/Azure) | Maximum control and compliance |
| Motia Cloud | Managed by Motia | Speed, auto-scaling, zero-config |
| BYOC | Managed Layer + Private Infra | Data sovereignty with managed convenience |

A critical feature of the Motia Cloud is its support for "Atomic Deployments," where each version of the application gets its own isolated infrastructure and message queues.18 This allows teams to roll back instantly if a change in data structure breaks in-flight messages, a common hazard in event-driven systems.1
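One way to obtain the isolation described above is to namespace every queue by deployment version, so that messages published under one version are only ever consumed by handlers from that same version. The naming scheme and the `VersionedBroker` class below are hypothetical and say nothing about how Motia Cloud actually implements atomic deployments; the example shows a v1 message that is still in flight when v2 ships with a changed payload shape.

```typescript
type Consumer = (message: unknown) => void;

class VersionedBroker {
  private readonly queues = new Map<string, unknown[]>();
  private readonly consumers = new Map<string, Consumer>();

  // e.g. "v2::payment": the version prefix isolates in-flight messages.
  static queueName(version: string, topic: string): string {
    return `${version}::${topic}`;
  }

  publish(version: string, topic: string, message: unknown): void {
    const key = VersionedBroker.queueName(version, topic);
    const q = this.queues.get(key) ?? [];
    q.push(message);
    this.queues.set(key, q);
  }

  register(version: string, topic: string, consumer: Consumer): void {
    this.consumers.set(VersionedBroker.queueName(version, topic), consumer);
  }

  // Deliver whatever is queued for this version only; returns messages handled.
  drain(version: string, topic: string): number {
    const key = VersionedBroker.queueName(version, topic);
    const consumer = this.consumers.get(key);
    const q = this.queues.get(key) ?? [];
    if (!consumer) return 0;
    const count = q.length;
    for (const m of q.splice(0)) consumer(m);
    return count;
  }
}

// v2 renamed `amount` to `amountCents`; each version keeps its own handler.
const broker = new VersionedBroker();
const seen: string[] = [];
broker.register("v1", "payment", (m) => { seen.push(`v1:${(m as { amount: number }).amount}`); });
broker.register("v2", "payment", (m) => { seen.push(`v2:${(m as { amountCents: number }).amountCents}`); });
broker.publish("v1", "payment", { amount: 5 }); // in flight when v2 ships
broker.publish("v2", "payment", { amountCents: 700 });
broker.drain("v1", "payment");
broker.drain("v2", "payment");
// seen is now ["v1:5", "v2:700"]: the old message never reaches the new handler.
```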

Conclusion: The Strategic Importance of Unified Orchestration

The Motia framework represents a significant evolution in the philosophy of backend development. By rejecting the fragmented, "glued-together" model of the past, Motia offers a vision of a unified system that is fundamentally simpler to build, observe, and scale. The framework’s success is predicated on three key strategic pillars:

First, the "Step" primitive successfully collapses the arbitrary distinctions between APIs, jobs, and workflows. This unification allows for a more holistic way of reasoning about software, where the developer focus shifts from managing infrastructure to authoring business logic. The ability to refactor a system's behavior by simply changing a configuration file is a powerful tool for maintaining architectural agility.

Second, the polyglot nature of the framework addresses the primary engineering challenge of the AI era. By bridging the TypeScript and Python ecosystems through a single engine, whose core is being moved toward Rust for performance, Motia aims to put "intelligent backends," previously the domain of large organizations with massive platform teams, within reach of small teams. The promise that a five-person company can orchestrate complex, multi-language AI agents with the same ease as a basic REST API is central to the framework's appeal.

Third, the framework acknowledges that in an era of zero-cost software generation, resilience and observability are the only remaining forms of sustainable leverage. Mike Piccolo’s emphasis on "system fragility" and "AI uptime" highlights the reality that as we delegate more logic to stochastic models, the underlying infrastructure must be more robust than ever. Motia provides this robustness not through external additions, but by making durability a native property of the runtime itself.

As backend engineering continues its journey toward autonomous, agentic systems, the importance of a unified foundation cannot be overstated. Motia is not just a tool for building faster; it is a framework for building the resilient, intelligent systems that will define the next generation of the internet. For the modern engineer, mastering the orchestration of these "Steps" is the key to thriving in the post-fragmentation era.

Works cited

1. Manifesto - Motia, accessed March 4, 2026, https://motia.dev/manifesto
2. Motia: make backend development boring (and that's the point) | by ..., accessed March 4, 2026, https://marcin-codes.medium.com/motia-make-backend-developement-boring-and-thats-the-point-b767a1e74eda
3. Motia: make backend development boring (and that's the point), accessed March 4, 2026, https://dev.to/marcin_codes/motia-make-backend-development-boring-and-thats-the-point-3afj
4. Motia.dev, Modern Backend Framework that unifies APIs, accessed March 4, 2026, https://thinkthroo.com/blog/motiadev-modern-backend-framework-that-unifies-apis-background-jobs-workflows-and-ai-into-a-single-cohesive-system
5. Motia - Code-First Backend Framework for APIs & AI Agents, accessed March 4, 2026, https://www.motia.dev/
6. MotiaDev/motia: Multi-Language Backend Framework that ... - GitHub, accessed March 4, 2026, https://github.com/MotiaDev/motia
7. Motia - GitHub, accessed March 4, 2026, https://github.com/MotiaDev
8. The New "Full Stack" in 2026 | Mike Piccolo founder of Motia Dev, accessed March 4, 2026, https://www.youtube.com/watch?v=aUrs65tgjiA
9. Backend Reloaded - WeMakeDevs, accessed March 4, 2026, https://www.wemakedevs.org/hackathons/motiahack25
10. The Founder's Code - Apple Podcasts, accessed March 4, 2026, https://podcasts.apple.com/de/podcast/the-founder-s-code/id1797768317
11. Mike Piccolo - Founder at motia - The Org, accessed March 4, 2026, https://theorg.com/org/motia/org-chart/mike-piccolo
12. Motia.dev: The Unified Backend Framework for the AI Era - Medium, accessed March 4, 2026, https://medium.com/@MakeComputerScienceGreatAgain/motia-dev-the-unified-backend-framework-for-the-ai-era-71f91f692403
13. The Backend Finally Makes Sense, Why I'm Loving Motia, accessed March 4, 2026, https://levelup.gitconnected.com/the-backend-finally-makes-sense-why-im-loving-motia-5c52acc0f749
14. Motia: The Developer's Secret Weapon for Building Smarter AI Agents, accessed March 4, 2026, https://www.oreateai.com/blog/motia-the-developers-secret-weapon-for-building-smarter-ai-agents/c70171813320c889731c3829c6ff6c49
15. Overview | Motia Docs, accessed March 4, 2026, https://www.motia.dev/docs/concepts/overview
16. Welcome to Motia | Motia Docs, accessed March 4, 2026, https://www.motia.dev/docs
17. MotiaDev/motia-examples - GitHub, accessed March 4, 2026, https://github.com/MotiaDev/motia-examples
18. Motia Cloud, accessed March 4, 2026, https://motia.dev/motia-cloud
19. Reliable data processing: Queues and Workflows - Temporal, accessed March 4, 2026, https://temporal.io/blog/reliable-data-processing-queues-workflows
20. Inngest vs. Temporal: Which one should you choose? - Akka, accessed March 4, 2026, https://akka.io/blog/inngest-vs-temporal
21. Rewrite our Core in either Go or Rust · Issue #482 · MotiaDev/motia, accessed March 4, 2026, https://github.com/MotiaDev/motia/issues/482
22. Multi-Language Development | Motia Docs, accessed March 4, 2026, https://www.motia.dev/docs/advanced-features/multi-language
23. How to Implement State Machines in Rust - OneUptime, accessed March 4, 2026, https://oneuptime.com/blog/post/2026-02-01-rust-state-machines/view
24. GitHub - MotiaDev/motia: Unified Backend Framework for APIs, accessed March 4, 2026, https://www.reddit.com/r/opensource/comments/1lx4q7b/github_motiadevmotia_unified_backend_framework/
25. State Management | Motia Docs, accessed March 4, 2026, https://www.motia.dev/docs/development-guide/state-management
26. Inngest vs Temporal: Durable execution that developers love, accessed March 4, 2026, https://www.inngest.com/compare-to-temporal?ref=footer-links
27. Inngest: The Event-Driven Platform for Reliable Workflows and AI, accessed March 4, 2026, https://javascript.plainenglish.io/demystifying-inngest-the-event-driven-platform-for-reliable-workflows-and-ai-orchestration-388dc80c03af
28. Durable Workflow Platforms for AI Agents and LLM Workloads, accessed March 4, 2026, https://render.com/articles/durable-workflow-platforms-ai-agents-llm-workloads
29. Has anyone used Temporal.io for production? : r/ExperiencedDevs, accessed March 4, 2026, https://www.reddit.com/r/ExperiencedDevs/comments/1ph8gru/has_anyone_used_temporalio_for_production/
30. The Ultimate Guide to TypeScript Orchestration: Temporal vs, accessed March 4, 2026, https://medium.com/@matthieumordrel/the-ultimate-guide-to-typescript-orchestration-temporal-vs-trigger-dev-vs-inngest-and-beyond-29e1147c8f2d
31. Building AI Content Moderation with Human-in-the-Loop Using, accessed March 4, 2026, https://blog.motia.dev/building-ai-content-moderation-with-human-in-the-loop-using-motia-slack-and-openai/
32. how does inngest compare to temporal? - Hacker News, accessed March 4, 2026, https://news.ycombinator.com/item?id=36697475
33. AI Development Guide | Motia Docs, accessed March 4, 2026, https://www.motia.dev/docs/ai-development-guide
34. Hosting - Motia, accessed March 4, 2026, https://motia.dev/hosting